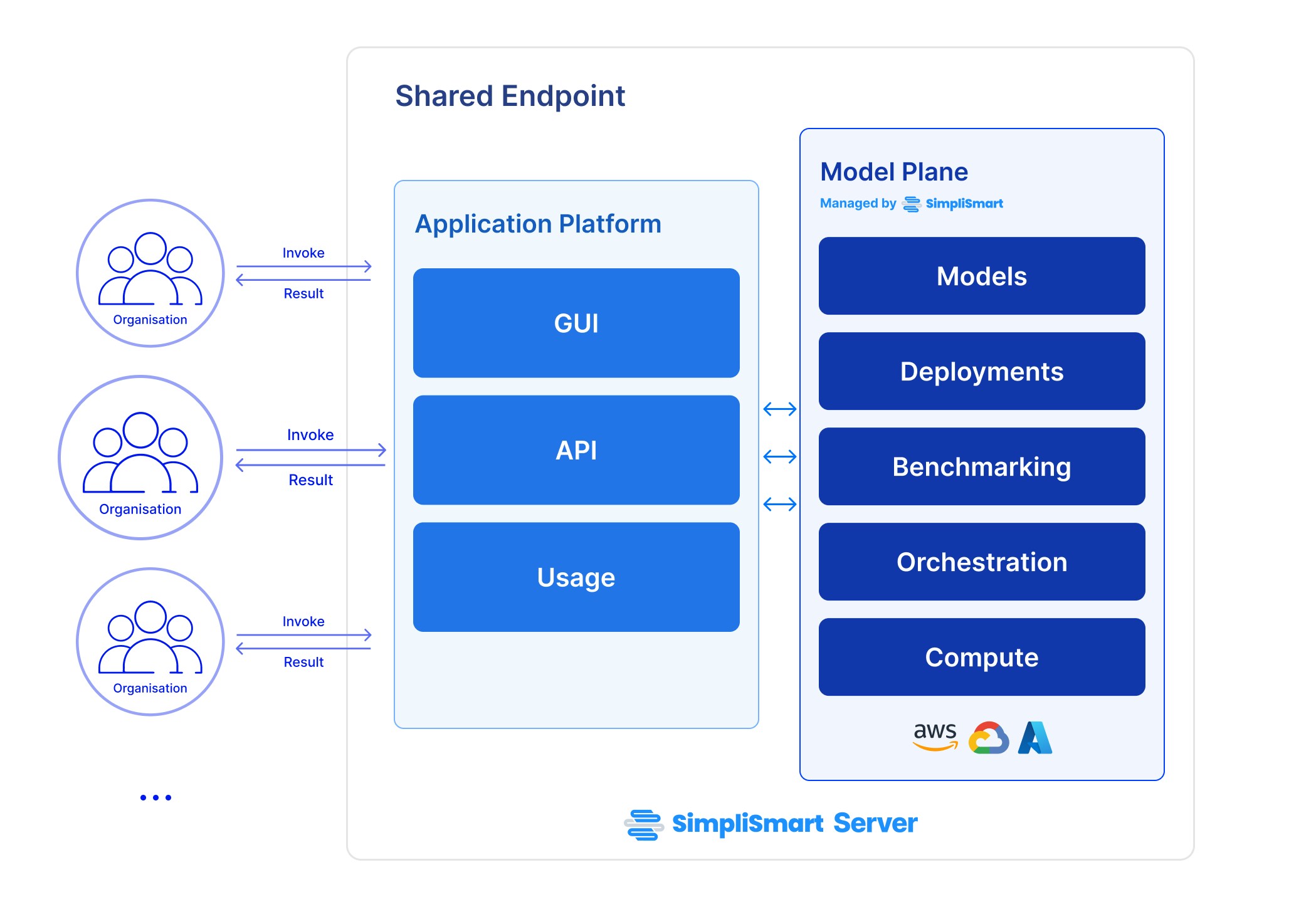

Shared endpoints allow you to invoke ML models using our shared infrastructure. This is a cost-effective solution for users who do not require dedicated resources.

Using a shared endpoint

Running inference on any model through a shared endpoint is straightforward: you can use our Playground directly or make an API call. Want dedicated infrastructure with stronger SLAs? Check out Private Endpoints.

For shared endpoints, pricing is based on the number of API calls and actual usage. For detailed pricing information, refer to the Pricing section on our website.
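This page does not specify the request format for an API call. The following is only a minimal sketch using Python's standard library. It assumes that the shared endpoint accepts an OpenAI-style chat-completions JSON body and a Bearer token, but that is an assumption and is not confirmed here. `ENDPOINT_URL`, `MODEL_ID`, the payload fields, and the helper names `build_request` and `call_endpoint` are all hypothetical placeholders. Replace them with the actual values and schema for your endpoint.

```python
import json
import urllib.request

# HYPOTHETICAL placeholders: not a documented Simplismart URL or model ID.
ENDPOINT_URL = "https://<your-shared-endpoint>/v1/chat/completions"
MODEL_ID = "<model-id>"


def build_request(prompt: str, api_key: str,
                  url: str = ENDPOINT_URL,
                  model: str = MODEL_ID) -> urllib.request.Request:
    """Build (but do not send) a JSON POST request for a shared endpoint.

    ASSUMPTION: an OpenAI-style chat-completions payload with Bearer-token
    auth. Check your endpoint's documentation for the real schema.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def call_endpoint(req: urllib.request.Request, timeout: float = 30.0) -> dict:
    """Send the request over the network and decode the JSON response body."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Building the request separately from sending it lets you inspect the URL, headers, and body before any network traffic occurs. Once the placeholders hold real values, `call_endpoint(build_request("Hello!", api_key=...))` sends the call and returns the parsed response.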