For custom models or custom pipelines, you must prepare the model configuration before adding the model to the platform. This includes defining the model logic, dependencies, and runtime environment. The platform expects all required files to be packaged together and provided as a single artifact (a ZIP file). This page describes how to implement the model interface, define runtime configuration, package your model, and add it through the Simplismart platform.

Model interface (model.py)

Your custom model must implement a standard interface so the platform can load and run it correctly.

Method requirements:

- load(): Handles model initialization and weight loading

- preprocess(): Optional input preprocessing

- predict(): Core inference logic

- postprocess(): Optional output formatting
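
The four methods above could be laid out in model.py roughly as follows. This is a minimal sketch, not the platform's confirmed interface: the class name Model, the method signatures, the request shape, and the toy "scale" weight are all assumptions made for illustration.

```python
from typing import Any


class Model:
    """Hypothetical custom model; the class name and signatures are assumptions."""

    def __init__(self) -> None:
        self.weights = None

    def load(self) -> None:
        # Model initialization and weight loading. A real implementation would
        # read weights from disk; a constant stands in for them here.
        self.weights = {"scale": 2.0}

    def preprocess(self, request: dict[str, Any]) -> list[float]:
        # Optional: turn the raw request into model-ready input.
        return [float(x) for x in request["inputs"]]

    def predict(self, inputs: list[float]) -> list[float]:
        # Core inference logic.
        if self.weights is None:
            raise RuntimeError("load() must be called before predict()")
        return [x * self.weights["scale"] for x in inputs]

    def postprocess(self, outputs: list[float]) -> dict[str, Any]:
        # Optional: format raw outputs into the response payload.
        return {"outputs": outputs}


if __name__ == "__main__":
    model = Model()
    model.load()
    request = {"inputs": [1, 2, 3]}
    print(model.postprocess(model.predict(model.preprocess(request))))
```

Keeping weight loading in load() rather than in the constructor means the expensive step runs once, at a point the caller controls, and predict() can fail clearly if it is called too early.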

Runtime configuration (config.yaml)

The config.yaml file defines the execution environment for the custom model.

| Section | Purpose |
|---|---|
| python_version | Python runtime version |
| environment_variables | Custom environment variables (if any) |
| requirements | Python dependencies |
| system_packages | OS-level packages |
| custom_setup_script | Optional setup script executed during build |
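
Before packaging, it can help to check that a config only uses the section names listed in the table. The sketch below assumes the config has already been parsed into a Python dict. The helper name unknown_sections and the example values are hypothetical; the value types (string, list) are placeholders rather than a confirmed schema.

```python
# Section names taken from the table above.
KNOWN_SECTIONS = {
    "python_version",
    "environment_variables",
    "requirements",
    "system_packages",
    "custom_setup_script",
}


def unknown_sections(config: dict) -> list[str]:
    """Return top-level keys that are not among the documented sections."""
    return sorted(set(config) - KNOWN_SECTIONS)


# Example values are placeholders, not a confirmed schema.
example = {
    "python_version": "3.10",
    "requirements": ["numpy"],
    "sytem_packages": ["ffmpeg"],  # deliberate typo to show the check
}
print(unknown_sections(example))  # prints ['sytem_packages']
```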

Packaging the custom model

Before adding the model to the platform, package all required files into a single ZIP file.

Create a single directory

Place the following in one folder:

- model.py

- config.yaml

- Any additional scripts or assets (e.g. script.sh)
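
Once the folder is assembled, the ZIP can be built with Python's standard zipfile module. This is a sketch under stated assumptions: the helper name package_model and the folder name my_model are hypothetical, and placing the files at the root of the archive (rather than inside a top-level folder) is an assumption about what the platform expects.

```python
import tempfile
import zipfile
from pathlib import Path


def package_model(source_dir: str, zip_path: str) -> list[str]:
    """Zip every file under source_dir and return the archived names."""
    root = Path(source_dir)
    for required in ("model.py", "config.yaml"):
        if not (root / required).is_file():
            raise FileNotFoundError(f"missing {required} in {root}")

    target = Path(zip_path).resolve()
    with zipfile.ZipFile(target, "w", zipfile.ZIP_DEFLATED) as archive:
        for path in sorted(root.rglob("*")):
            # Skip directories and the archive itself if it lives in the folder.
            if path.is_file() and path.resolve() != target:
                # Assumption: files are stored relative to the folder (ZIP root).
                archive.write(path, path.relative_to(root).as_posix())
        return archive.namelist()


if __name__ == "__main__":
    # Build a throwaway folder so the example runs anywhere.
    workdir = Path(tempfile.mkdtemp())
    folder = workdir / "my_model"
    folder.mkdir()
    (folder / "model.py").write_text("# model code goes here\n")
    (folder / "config.yaml").write_text("python_version: '3.10'\n")
    print(package_model(str(folder), str(workdir / "my_model.zip")))
```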

Upload your trained model to AWS S3 or GCP GCS, share the access credentials, and the platform will compile and prepare it for deployment. Models built to your specifications are integrated into the platform.

Adding the custom model to the platform

On the Simplismart platform, provide your ZIP file, choose Custom Pipeline as the model type, and add the model. The platform then unpacks the archive and loads your model.

Open Add Model

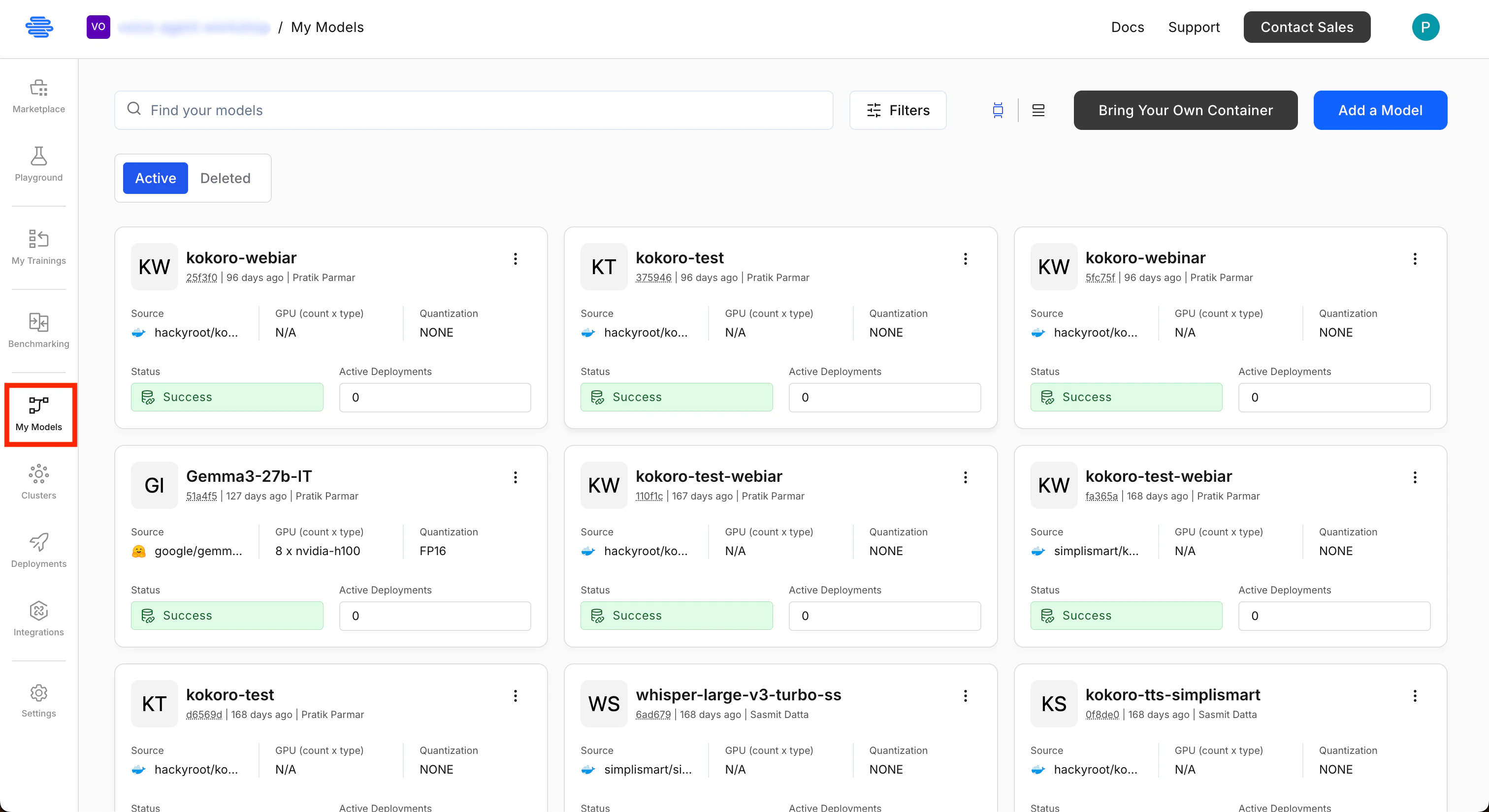

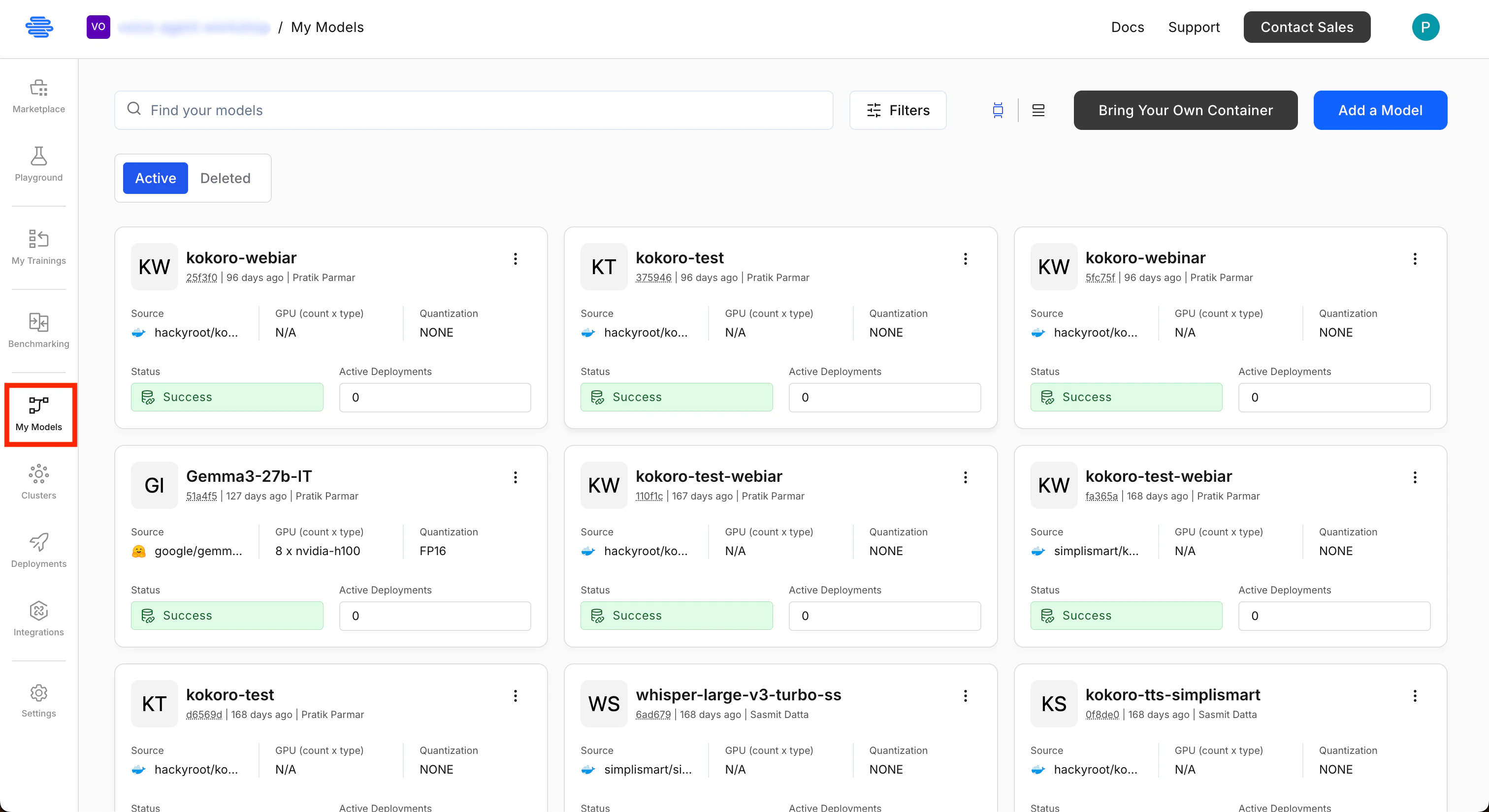

Go to My Models and click Add a Model (top-right).

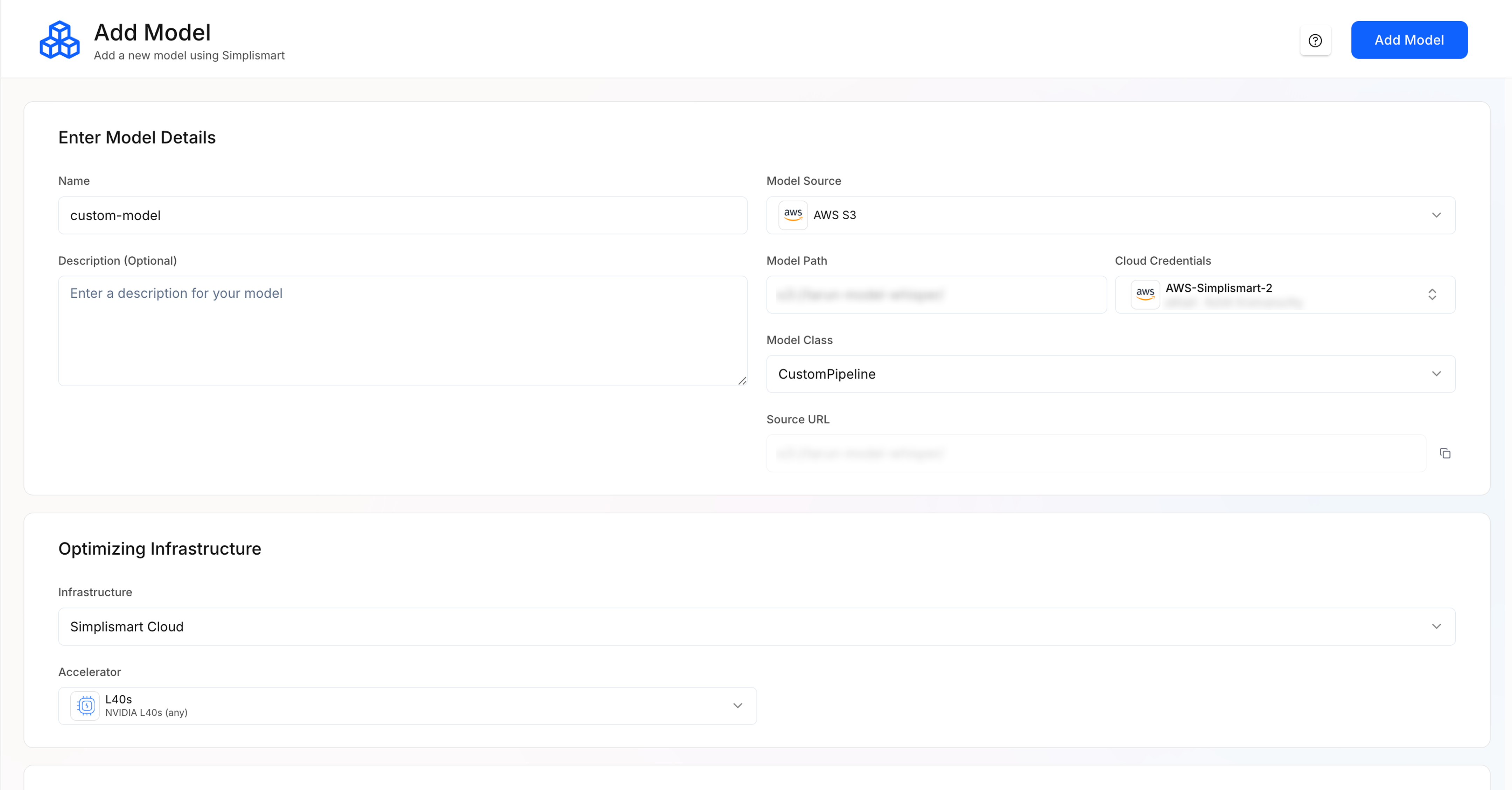

Enter model details

- Model name: A name for your model.

- Model source: Hugging Face, AWS S3, GCP GCS, or Public URL (use the source where you uploaded the ZIP).

- Model path: Path to the ZIP file (e.g. S3 URI, GCS URI, or public URL).

- If using AWS or GCP, select the linked Cloud credentials.

Select infrastructure

Choose Simplismart Cloud or Bring Your Own Cloud. Select Accelerator type and machine type based on your model size and compute requirements.

Pipeline configuration (Optional)

Use the Pipeline Config Editor or Extra Params to tune deployment. For custom models, set type to "custom". See the table and example below.

Extra parameters (optional)

Based on your model pipeline, you can add extra parameters in JSON format under Extra Params.

| Field | Type | Default | Description |
|---|---|---|---|
| workers_per_device | int | 1 | Parallel workers per device (higher can improve inference speed). |
| device | string | cpu | "cpu" or "cuda". |
| endpoint | string | /predict | URL path for inference requests. |
| type | string | (required) | Use "custom" for custom models; other values: "whisper", "llm", "sd". |
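
As an illustration, the snippet below builds an Extra Params payload from the fields in the table and prints it as JSON for pasting into the editor. The field names and allowed values come from the table; the specific values chosen (a GPU device and two workers) are only an example, and treating the fields as a flat JSON object is an assumption.

```python
import json

# Field names from the table above; values are illustrative.
extra_params = {
    "type": "custom",          # required for custom models
    "device": "cuda",          # "cpu" (default) or "cuda"
    "workers_per_device": 2,   # default is 1
    "endpoint": "/predict",    # default URL path for inference requests
}

extra_params_json = json.dumps(extra_params, indent=2)
print(extra_params_json)
```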